Posted by Michael Lerner

When Google employees talk about the Panda update being run across the web in the next two to four weeks, we all want to understand more about how the algorithm changes might affect the sites we manage. It’s important to look at the details because that’s where we can get the best information on how to flourish in the midst of SEO and page ranking changes.

Historically, Google’s Panda and Penguin updates have disrupted rankings across the entire web. Panda targets sites with thin or low-quality content, while Penguin weeds out sites that rank high because they built up a lot of backlinks without any authority or legitimate reason to be tied to a specific website. Penguin hurt a lot of sites that had been optimized by purchasing, in some cases, thousands of “spammy” links. According to an announcement by Google’s Gary Illyes at SMX this week, Panda will be run again in the next couple of weeks. Illyes has referred to it multiple times as a data refresh, so previously penalized sites may well recover, while others may suffer new penalties.

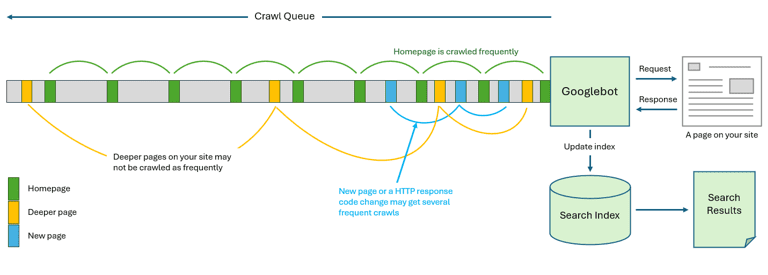

Google’s search algorithm is said to have more than 200 ranking factors, although John Mueller indicated during a recent Webmaster Central Hangout that “it’s not exactly 200, we are not counting on a spreadsheet or anything.” The algorithm formula changes every day. Referred to as the “secret sauce,” the Google algorithm ranks URLs and website landing pages for SERPs. Interestingly, Google uses different methods to improve its algorithm and search quality.

One stage of changing the algorithm involves human testers looking at different search results and choosing which they prefer. These testers give their preferences before the algorithm change goes into many live Internet tests. Mueller mentioned that Google occasionally goes to high schools and asks people with no experience in SEO or search what they think of search. He said that “they get some interesting results” when testing search in this way.

André Morys, CEO and founder of Web Arts, said something similar in his recent Conversion Excellence speech at KahenaCon: the best way to test a webpage’s effectiveness is to show it to somebody who knows nothing about the page’s subject, even somebody who doesn’t know the language of the site, and ask them what they think about the page.

This fresh approach may help, because it’s difficult to chase the algorithm for higher ranking.

Link buying, keyword stuffing and other older SEO techniques have fallen by the wayside. Even click-through rate (CTR) on a SERP, which has been vaunted as a number one algo factor, may or may not be a ranking factor. Gary Illyes, a Webmaster Trends Analyst at Google, said recently that CTR is not a major signal and that it can’t be used in an algorithm because all you need is a machine or person clicking on the link, which makes it too easy to cheat. Yet SEO experts have run their own tests and say just the opposite: that click-through rate is a huge ranking factor. Google takes many things into account when ranking a website. You can work on ranking in one area and it might help you, but the algorithm looks at a broad range of aspects of a website and works to balance the site as a whole.
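To make the “too easy to cheat” point concrete, here is a minimal sketch of how CTR is calculated (clicks divided by impressions) and how a modest number of automated clicks could inflate it. The function name and all of the numbers are hypothetical and chosen purely for illustration; this is not how Google measures or uses CTR.

```python
def click_through_rate(clicks: int, impressions: int) -> float:
    """Return CTR as a fraction: clicks divided by impressions."""
    if impressions <= 0:
        return 0.0
    return clicks / impressions


# Hypothetical numbers for a single search result (illustration only).
organic = click_through_rate(clicks=30, impressions=1000)

# Assumption: a script loads the results page 70 more times
# and clicks the listing every time.
inflated = click_through_rate(clicks=30 + 70, impressions=1000 + 70)

print(f"organic CTR:  {organic:.1%}")
print(f"inflated CTR: {inflated:.1%}")
```

In this made-up case, 70 scripted clicks roughly triple the measured CTR, which is the kind of manipulation Illyes was describing.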

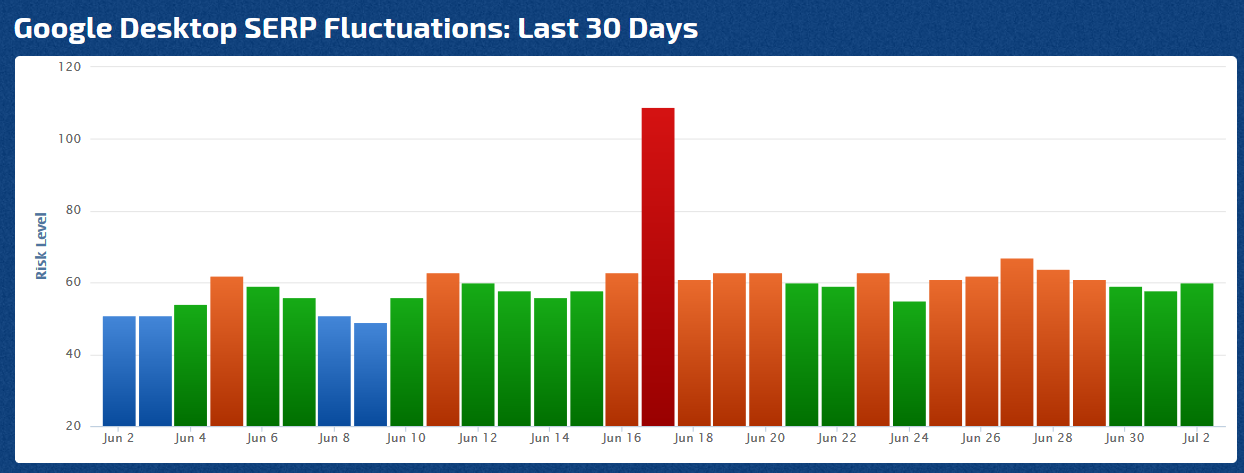

When there are changes in the algorithm, whether updates or one-time scans of the web, rankings can change considerably, so keep an eye on our Rank Risk Index.

The truth of Google’s algorithm remains a mystery, not only because it’s proprietary but also to deter cheaters. Fortunately, we do have Google’s guidelines; the all-important watchword of the SEO faith, user experience; and SEO experts and digital marketers who know how to build a website and write good-quality content that ranks high and works to promote and create commerce for its owner.