THE LATEST

SPOTLIGHT

Hot News

- 7 Ways SEO and Product Teams Can Collaborate to Ensure Success

- Google Universal Analytics 360 Sunsetting Soon: Migration Tips & Top Alternative Inside

- Surviving and Thriving in the New Google

- Leveraging Data Cloud for Medical Devices

- 5 tips on how to use TikTok for your business

IN THIS WEEK’S ISSUE

- 7 Ways SEO and Product Teams Can Collaborate to Ensure Success

  Let’s consider the example of developing author pages for a blog. At…

- Google Universal Analytics 360 Sunsetting Soon: Migration Tips & Top Alternative Inside

  This post was sponsored by Piwik PRO. The opinions expressed in this…

- Surviving and Thriving in the New Google

  May 14, 2024, 1:00 EDT (10:00 PDT). Speakers: Vince Ramos, Sales Consultant at…

- Leveraging Data Cloud for Medical Devices

  From patient records to medical device telemetry, the volume and diversity of…

- 5 tips on how to use TikTok for your business

  If you want to reach a younger audience, you need to look…

- Google Further Postpones Third-Party Cookie Deprecation In Chrome

  Google has again delayed its plan to phase out third-party cookies in…

- Daily Search Forum Recap: April 24, 2024

  Here is a recap of what happened in the search forums today,…

- Google Warns Of “New Reality” As Search Engine Stumbles

  Google’s SVP overseeing Search, Prabhakar Raghavan, recently warned employees in an internal…

- Google Revised The Favicon Documentation

  Google revised their documentation on Favicons in order to add definitions in…

- Google delays third-party cookie phase-out to 2025 (maybe)

  Google has postponed the deprecation of third-party cookies in Chrome this year…

- LinkedIn advertising: A comprehensive guide

  Are you seeking to broaden your marketing horizons and harness the vast…

-

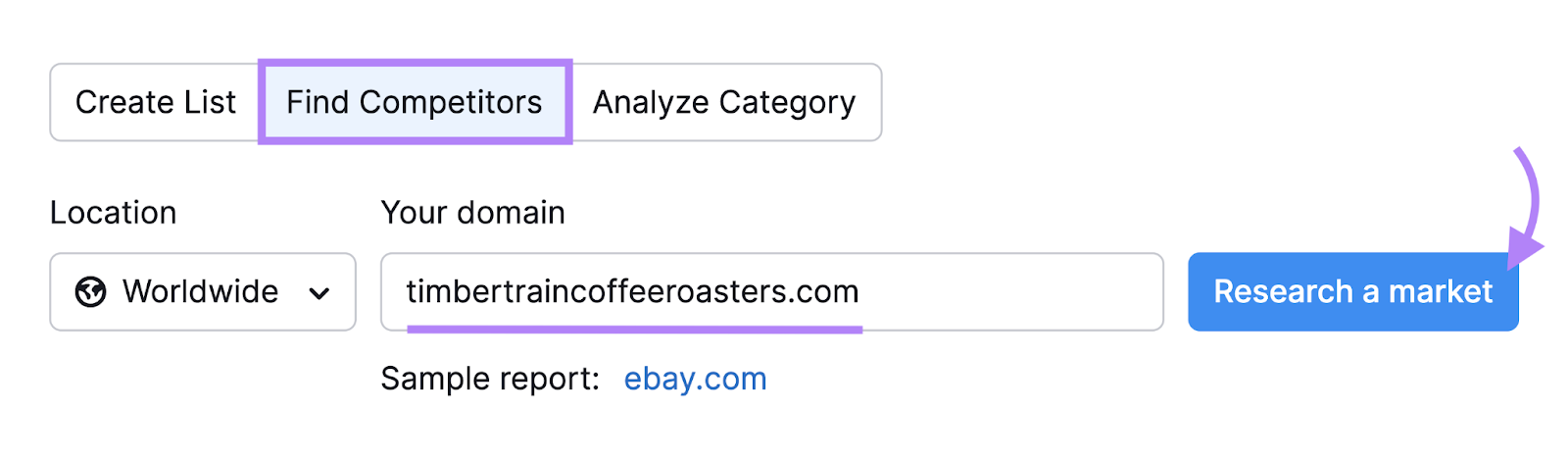

How to Perform a Social Media Competitor Analysis in 6 Steps

A social media competitor analysis involves collecting and evaluating information about your…
